SecLint: An Agentic Code Vulnerability Scanner

Context‑Aware, Agent‑Driven Security Scanning That Scales Beyond Manual Reviews

GitHub repo: SecLint (Har1sh-k/SecLint).

I built SecLint to address a frustrating gap in code security. Manual code reviews are valuable, but they are “slow, error-prone, and don’t scale”. Rule-based static analyzers (SAST) offer speed, but they flood you with generic alerts and false positives. Worse, these tools often lack contextual understanding because they rely solely on predefined rule sets. Neither manual review nor traditional tools were enough, and vanilla LLMs on their own can hallucinate issues without solid grounding.

SecLint closes this gap with a retrieval-augmented generation (RAG) pipeline that grounds the LLM in security know-how. It is described as “a Python-based AI agent for detecting insecure code patterns” that leverages RAG with OpenAI embeddings and ChromaDB. In practice, the agent retrieves relevant secure‑coding guidance before analysis. It then generates remediation steps based on both the snippet’s context and those best practices, following the ReAct pattern. This way, the AI doesn’t just guess: it “consults a live knowledge source”, which reduces hallucination. I stored OWASP’s secure-coding guidelines, a “technology agnostic set of general software security coding practices”, in a local ChromaDB vector store using OpenAI embeddings, so the LLM always has authoritative best practices to reference.
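To make the grounding step concrete, here is a minimal sketch of the retrieval half of a RAG pipeline. It makes several simplifying assumptions:

- A toy bag-of-words vector stands in for OpenAI embeddings.
- A plain Python list stands in for ChromaDB.
- The three guideline strings are paraphrased placeholders, not OWASP’s exact text.

The shape of the idea still carries over. You embed the query, rank the stored guidelines by cosine similarity, and hand the top hits to the LLM.

```python
import math
import re
from collections import Counter

# Placeholder guideline snippets, paraphrased for illustration (not OWASP's exact text).
GUIDELINES = [
    "Disable debugging features and verbose error messages in production.",
    "Use parameterized queries to prevent SQL injection.",
    "Enforce authorization checks on every request for a specific object.",
]


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. A real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors (0.0 if either is empty)."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents whose vectors are most similar to the query's vector."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


print(retrieve("SQL injection through string concatenation", GUIDELINES, k=1))
# prints ['Use parameterized queries to prevent SQL injection.']
```

A real embedding model captures meaning rather than shared words. That is why the actual pipeline relies on OpenAI embeddings instead of word counts.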

SecLint’s Pipeline

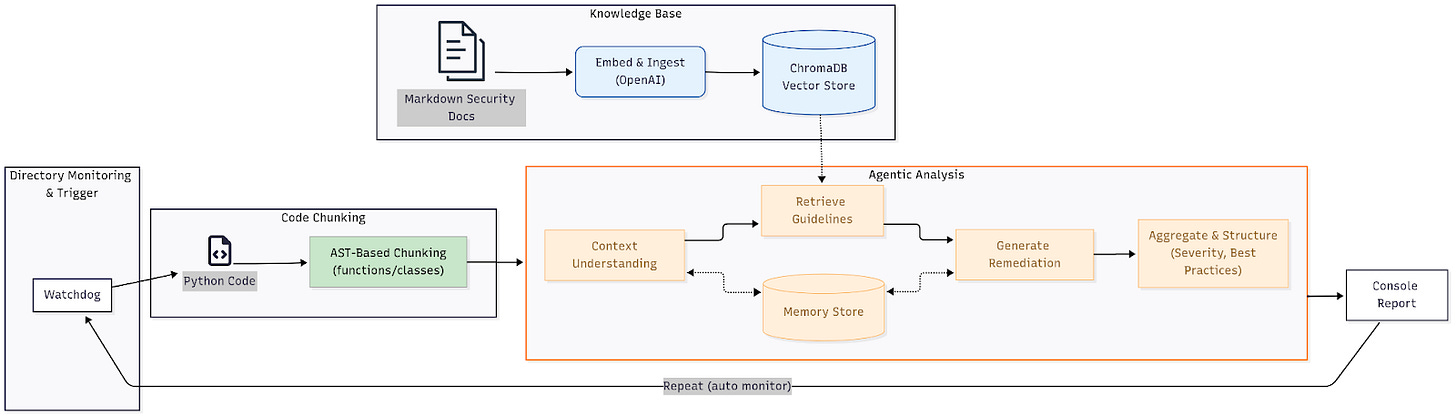

Figure: SecLint’s pipeline. A filesystem watcher (Watchdog) triggers on changes, the code is parsed by AST, relevant OWASP guidelines are fetched via a ChromaDB vector store, and an LLM (via LangChain) analyzes each chunk to suggest fixes.

The diagram above shows the SecLint workflow. Whenever a Python file is saved, a Watchdog listener kicks off the process. Watchdog is a cross-platform Python library that “allows you to generate a trigger in response to any changes in the file system being monitored”, so it reliably fires when code changes. Next, SecLint uses Python’s built-in ast module to parse the file into an Abstract Syntax Tree. The ast module “helps Python applications to process trees of the Python abstract syntax grammar”. In other words, the code is split into logical chunks (functions, classes, global code) without executing anything, which preserves structure and context for analysis. Text is usually split by lines or tokens, but that can cut a function in half. With code, the logic must stay intact even after splitting. So I used an AST-based splitter, which keeps each logical unit whole and gives the LLM the best possible input.
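Here is a sketch of AST-based chunking using only the standard library. It is an illustration under assumptions, not SecLint’s actual splitter:

- The function name `chunk_source` and the dictionary fields are hypothetical.
- Each top-level function or class becomes one chunk, with its decorators included.
- Consecutive top-level statements in between are grouped into a “global” chunk.

```python
import ast


def chunk_source(source: str) -> list[dict]:
    """Split Python source into top-level chunks without executing it.

    Each function or class becomes its own chunk (decorators included);
    runs of other top-level statements are grouped into "global" chunks.
    """
    tree = ast.parse(source)  # parse only; nothing is executed
    lines = source.splitlines()
    chunks: list[dict] = []
    pending: list[ast.stmt] = []  # consecutive module-level statements

    def add_chunk(kind: str, name: str, start: int, end: int) -> None:
        chunks.append({
            "kind": kind,
            "name": name,
            "start": start,
            "end": end,
            "code": "\n".join(lines[start - 1:end]),
        })

    def flush_pending() -> None:
        if pending:
            add_chunk("global", "<module>", pending[0].lineno, pending[-1].end_lineno)
            pending.clear()

    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            flush_pending()
            # Start at the first decorator so e.g. @app.route stays with its handler.
            start = min([node.lineno] + [d.lineno for d in node.decorator_list])
            kind = "class" if isinstance(node, ast.ClassDef) else "function"
            add_chunk(kind, node.name, start, node.end_lineno)
        else:
            pending.append(node)
    flush_pending()
    return chunks


sample = '''import os

@app.route("/users/<int:user_id>")
def get_user_details(user_id):
    return str(user_id)

if __name__ == "__main__":
    app.run(debug=True)
'''
for chunk in chunk_source(sample):
    print(chunk["kind"], chunk["name"], chunk["start"], chunk["end"])
# global <module> 1 1
# function get_user_details 3 5
# global <module> 7 8
```

The sample references `app` without defining it. That works because `ast.parse` only builds the tree and never runs the code.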

Each code chunk then enters the RAG pipeline. I use LangChain to orchestrate GPT-4 calls and retrieval. LangChain “provides a flexible and scalable platform for building advanced language models, making it an ideal choice for implementing RAG”. In practice, the agent does three things with each snippet: (1) It uses the LLM to understand and summarize the code’s context; (2) It performs a semantic search in the ChromaDB vector store (built from OWASP docs) to fetch relevant secure-coding guidelines; (3) It prompts the LLM to generate JSON-formatted remediation steps that combine the context and retrieved best practices. The result of all this is collected into a report entry per snippet.
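The three per-chunk steps can be outlined as one function. This is a hypothetical sketch, not SecLint’s LangChain code:

- `summarize`, `retrieve`, and `generate` are injected callables. They stand in for the LLM calls and the ChromaDB search.
- The JSON keys `severity`, `remediation_steps`, and `summary` are an assumed schema.

```python
import json
from typing import Callable


def analyze_chunk(
    code: str,
    summarize: Callable[[str], str],       # hypothetical: LLM call that describes the chunk
    retrieve: Callable[[str], list[str]],  # hypothetical: vector-store similarity search
    generate: Callable[[str], str],        # hypothetical: LLM call expected to return JSON text
) -> dict:
    """Run the three per-chunk steps and return one report entry."""
    # (1) Understand and summarize the code's context.
    context = summarize(code)
    # (2) Fetch secure-coding guidelines relevant to the summary and the code.
    guidelines = retrieve(context + "\n" + code)
    # (3) Ask for JSON remediation steps grounded in both.
    prompt = (
        "Respond with JSON containing keys 'severity', 'remediation_steps', 'summary'.\n\n"
        f"Code:\n{code}\n\nContext:\n{context}\n\nGuidelines:\n"
        + "\n".join(f"- {g}" for g in guidelines)
    )
    raw = generate(prompt)
    try:
        result = json.loads(raw)
    except json.JSONDecodeError:
        result = None
    if not isinstance(result, dict):
        # LLM output is not guaranteed to be a JSON object; keep the raw text instead of crashing.
        result = {"severity": "unknown", "remediation_steps": [], "summary": raw}
    return {"context": context, "best_practices": guidelines, **result}
```

Passing the model and retriever in as plain functions keeps the control flow testable offline with fakes. The real LLM and vector-store calls can then be swapped in without changing the steps.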

Directory Watching (Watchdog): SecLint uses Watchdog to monitor the target folder. When it detects a new or saved .py file, it triggers the analysis in real time, so scanning happens automatically as you code. The target directory is read from the config.yaml file. If you set it to a parent directory, SecLint also monitors all of its subdirectories.

AST-Based Chunking: Using Python’s ast.parse, SecLint converts the code into an AST. This makes it easy to isolate each function, class, or global statement as a separate chunk while retaining the logical flow of the code.

Knowledge Base (ChromaDB + OWASP): The tool builds a local RAG database from OWASP’s secure coding guidelines. These guidelines (as OWASP notes, a “technology agnostic set of general software security coding practices”) were written in Markdown and then embedded via OpenAI’s embeddings API into ChromaDB. During analysis, SecLint queries this database for best-practice guidelines relevant to the current code, grounding the LLM’s suggestions in authoritative guidance.
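Watchdog uses OS-level file-system notifications where they are available. The sketch below is a deliberately simpler, stdlib-only substitute that polls modification times. It exists only to illustrate two behaviors described above: reacting to new or changed `.py` files, and recursing into subdirectories. The function names are hypothetical. In SecLint the root directory would come from config.yaml, which is not modeled here.

```python
import os
import time
from typing import Callable


def snapshot(root: str) -> dict[str, float]:
    """Map every .py file under root (including subdirectories) to its mtime."""
    mtimes: dict[str, float] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(".py"):
                path = os.path.join(dirpath, name)
                try:
                    mtimes[path] = os.path.getmtime(path)
                except FileNotFoundError:
                    pass  # file was removed between listing and stat
    return mtimes


def changed_files(before: dict[str, float], after: dict[str, float]) -> list[str]:
    """Paths that are new, or whose mtime changed, between two snapshots."""
    return sorted(path for path, mtime in after.items() if before.get(path) != mtime)


def poll(root: str, on_change: Callable[[str], None], interval: float = 1.0) -> None:
    """Call on_change(path) for each new or modified .py file; runs until interrupted."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        for path in changed_files(previous, current):
            on_change(path)
        previous = current
```

Deleted files are ignored, matching the “new or saved” trigger. Event-based watching avoids the polling delay and the cost of re-walking the tree, which is the main reason to use a library like Watchdog.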

Contextual LLM Analysis (LangChain + GPT-4/o4-mini-high): I used LangChain to chain together the LLM steps. The agent first summarizes what a code chunk does and stores that context in a memory store. It then runs a similarity search for secure coding rules and finally prompts GPT-4/o4-mini-high to produce a detailed fix. Using LangChain’s ReAct-style tools, SecLint effectively reasons about the code piece by piece. The LLM sees the code, the retrieved OWASP context, and a system prompt that guides it to explain and fix the issue.
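Here is a sketch of how those inputs (system prompt, code, summary, and retrieved OWASP context) might be assembled into chat messages. It rests on these assumptions:

- The role/content message format mirrors common chat-completion APIs.
- The wording of `SYSTEM_PROMPT` is illustrative, not SecLint’s actual prompt.
- The function name `build_messages` is hypothetical.

```python
SYSTEM_PROMPT = (
    "You are a secure-code reviewer. Ground your answer in the guidelines provided: "
    "explain any security issue in the code, assign a severity, and propose concrete "
    "fixes. Respond with JSON only."
)


def build_messages(code: str, summary: str, guidelines: list[str]) -> list[dict[str, str]]:
    """Assemble chat messages: a fixed system prompt plus code, summary, and retrieved context."""
    numbered = "\n".join(f"{i}. {g}" for i, g in enumerate(guidelines, start=1))
    user_content = (
        f"Code under review:\n{code}\n\n"
        f"What the code does:\n{summary}\n\n"
        f"Relevant secure-coding guidelines:\n{numbered or '(none retrieved)'}"
    )
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]
```

The instructions live in the system message and the per-chunk material goes in the user message. That way the instructions stay constant while the code and retrieved guidelines change from chunk to chunk.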

Output Report: SecLint aggregates results per code chunk and prints a structured report to the console. For each snippet it lists a brief Context description, a Severity level, the relevant Best Practices from OWASP, and a Recommendation Summary. In short, you get an easy-to-read security review with actionable advice.
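Here is a sketch of rendering such a per-chunk report as plain console text, using the four fields listed above. The dictionary keys and the sample entry are made up for illustration. They are not SecLint’s real data structures or output.

```python
def format_report(entries: list[dict]) -> str:
    """Render report entries as plain console text, one block per code chunk."""
    blocks = []
    for entry in entries:
        practices = "\n".join(f"  - {p}" for p in entry.get("best_practices", []))
        blocks.append(
            f"[{entry.get('chunk', '<unknown chunk>')}]\n"
            f"Context: {entry.get('context', '')}\n"
            f"Severity: {entry.get('severity', 'unknown')}\n"
            f"Best Practices:\n{practices or '  (none)'}\n"
            f"Recommendation Summary: {entry.get('summary', '')}"
        )
    return "\n\n".join(blocks)


# Illustrative entry only; not real SecLint output.
print(format_report([{
    "chunk": "<module> lines 7-8",
    "context": "Starts the Flask development server with debug=True.",
    "severity": "High",
    "best_practices": ["Disable debug mode in production.", "Separate dev and prod settings."],
    "summary": "Read the debug flag from environment-specific configuration.",
}]))
```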

Building the pipeline involved many tech choices. Watchdog and Python’s AST module both worked smoothly (no need to reinvent the wheel). ChromaDB handled indexing the OWASP docs quickly. LangChain made orchestrating multiple LLM calls fairly straightforward, though writing clear prompts and managing token budgets took trial and error. But overall this setup – a RAG pipeline with GPT-4 and OWASP data – proved powerful and surprisingly precise for real-time scanning.

Outperforming Traditional Static Analysis

SecLint goes beyond simple linting by actually reasoning about code rather than just pattern‑matching. Traditional SAST tools look for fixed patterns and can’t explain why something is wrong; by contrast, SecLint understands context and ties issues back to best practices. As the project README highlights, “Unlike traditional static code analyzers, SecLint leverages a contextual LLM-driven RAG pipeline”. In practice this means SecLint can explain why a line is vulnerable and how to fix it, not just flag it.

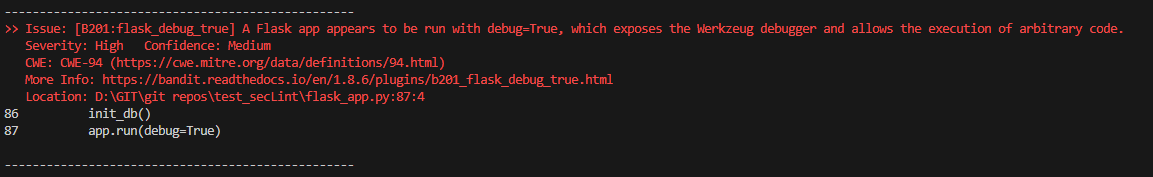

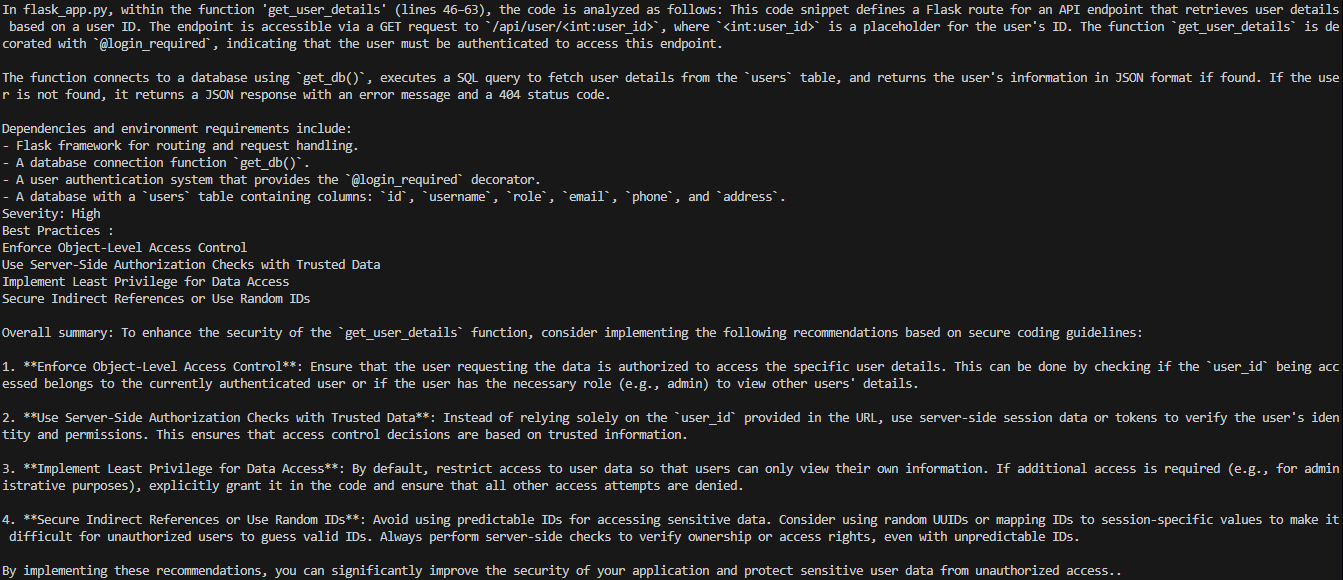

For example, when inspecting the app.run(debug=True) call, Bandit simply flags a B201: flask_debug_true issue at line 87, notes High severity with Medium confidence, and points to CWE‑94 and its plugin docs. SecLint, by contrast, examines the same lines in the __main__ block, explains that the code is initializing the database and launching the Flask server in debug mode, retrieves OWASP best practices (disable debug in production, separate dev/prod settings, limit log detail, sanitize logs), and then synthesizes four actionable remediation steps—complete with severity labels and a concise summary.
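For contrast, the rule-based side of this comparison fits in a few lines. The sketch below is a simplified pattern check in the spirit of Bandit’s B201: flask_debug_true. It is not Bandit’s actual implementation, and the function name is hypothetical. It walks the AST and reports any `.run(...)` call that passes the literal `debug=True`. It can flag the line, but it knows nothing about why the setting matters, and it misses the same setting routed through a variable.

```python
import ast


def find_debug_true_runs(source: str) -> list[int]:
    """Return line numbers of calls like `something.run(..., debug=True)`."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "run"
        ):
            for kw in node.keywords:
                # Only the literal True matches; names and expressions are not resolved.
                if kw.arg == "debug" and isinstance(kw.value, ast.Constant) and kw.value.value is True:
                    hits.append(node.lineno)
    return sorted(hits)


snippet = (
    "DEBUG = True\n"
    "if __name__ == '__main__':\n"
    "    app.run(debug=DEBUG)\n"
)
print(find_debug_true_runs("app.run(debug=True)"))  # [1]
print(find_debug_true_runs(snippet))                # [] because the value is a variable, not the literal True
```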

SecLint Output:

Bandit Output:

Bandit misses deeper logic flaws entirely, but SecLint catches those too. In the get_user_details endpoint, any authenticated user can supply an arbitrary ID and retrieve another user’s record. Bandit issues no warning here. SecLint, by contrast, detects the absence of object‑level access control and warns that the route allows unauthorized data exposure. It recommends server‑side authorization checks, strict least‑privilege data access, and the use of indirect or randomized references.
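The server-side authorization check recommended above can be sketched without any web framework. Everything here is hypothetical:

- the `User` type
- the in-memory `USERS` table
- the `is_admin` flag
- the choice to raise `PermissionError` (in Flask this would typically become a 403 response)

The point of the check is to compare the requested record against the authenticated caller rather than trusting the ID in the URL. The sketch covers only the authorization check and field minimization, not indirect or randomized references.

```python
from dataclasses import dataclass


@dataclass
class User:
    id: int
    name: str
    is_admin: bool = False


# Hypothetical in-memory "database" for the sketch.
USERS = {1: User(1, "alice"), 2: User(2, "bob"), 3: User(3, "root", is_admin=True)}


def get_user_details(current_user: User, requested_id: int) -> dict:
    """Return a user record only if the caller owns it or is an admin."""
    # Object-level access control: being authenticated is not enough.
    # Checked before the lookup so unauthorized callers cannot probe which IDs exist.
    if current_user.id != requested_id and not current_user.is_admin:
        raise PermissionError("not authorized to view this user")
    record = USERS.get(requested_id)
    if record is None:
        raise KeyError(requested_id)
    # Least privilege: return only the fields the caller needs.
    return {"id": record.id, "name": record.name}


alice = USERS[1]
print(get_user_details(alice, 1))  # {'id': 1, 'name': 'alice'}
try:
    get_user_details(alice, 2)  # Alice asks for Bob's record
except PermissionError as exc:
    print("denied:", exc)  # denied: not authorized to view this user
```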

SecLint Output:

This kind of contextual guidance is far richer than a static checker’s generic warning. By cross-checking each finding against OWASP guidelines and using the LLM to reason, SecLint effectively filters out many false alarms and provides actionable fixes. In my tests, it produced more focused, relevant results than a typical SAST run. It also often catches subtle logic issues that static rules would miss.

In summary, SecLint complements static analysis by adding human-like understanding. It reasons about code rather than blindly matching patterns, which is exactly what traditional tools can’t do. The name reflects that goal: a security-focused lint tool (lint as in code inspection). The result is security feedback that developers find more useful and easier to act on.

Tested against the vulnerable app at anshumanbh/vulnapp.

Conclusion and Future Work

SecLint is a working prototype, but it shows how RAG can improve code security scans. By combining Watchdog, Python AST parsing, OpenAI embeddings, ChromaDB, and a LangChain-driven LLM agent, it provides context-aware vulnerability checks that go beyond static rules. The key lesson: give the AI a knowledge base of best practices (OWASP) and it becomes a far more accurate security assistant.

Looking ahead, I plan to polish and extend SecLint. Possible next steps include:

SAST Integration: Combining deterministic (SAST) and non-deterministic (AI‑driven) approaches.

Multi-Language Support: Extending beyond Python to other languages like JavaScript, Java, or Go by adjusting the AST/parsing step and adding language-specific guidance.

CI/CD Hooks: Integrating SecLint into build pipelines so that pull requests are automatically scanned, and findings are posted as comments or issues.

Knowledge Base Expansion: Adding more security docs (e.g. SANS guides, CERT rules, or project-specific policies) to the RAG store, and possibly allowing custom corpora for specialized domains.

Prompt Refinement: Using a declarative, self‑improving framework such as DSPy to iteratively refine LLM prompts and improve the accuracy and consistency of generated advice.

The goal is a seamless developer tool that treats security as part of the normal workflow, not a separate chore. With the core RAG pipeline in place (diagram shown above), SecLint already demonstrates better contextual analysis than conventional scanners. Future improvements will focus on SAST integration, but the underlying architecture (a contextual LLM plus a knowledge base) will remain the same.

Acknowledgments

Special thanks to Anshuman Bhartiya and Avradeep Bhattacharya for the idea and guidance.

See also: SecLint GitHub repository (Har1sh-k/SecLint).
